I made a thing for 0h game jam

http://thatsnail.com/textadventure/

(it took 6 hours)

Sunday, November 3, 2013

Monday, July 29, 2013

MITRE 2013 Writeup : Network 200

Wireshark .pcap file shows a bunch of TCP and HTTP requests, most notably to vimeo.com. Saving the TCP stream into a file...

HTTP/1.1 200 OK

Server: Apache

X-Powered-By: PHP/5.4.14

Expires: Sun, 14 Jul 2013 21:17:37 GMT

Vary: User-Agent,Accept-Encoding

X-Frame-Options: SAMEORIGIN

X-DNS-Prefetch-Control: on

Content-Encoding: gzip

Content-Type: text/html; charset=UTF-8

Transfer-Encoding: chunked

Date: Sun, 14 Jul 2013 20:17:37 GMT

X-Varnish: 155913018

Age: 0

Via: 1.1 varnish

Cache-Control: no-store, no-cache, must-revalidate, post-check=0, pre-check=0

X-Varnish-Cache: 0

Connection: close

X-VServer: 10.90.128.147

...and loading it up onto localhost via ncat...

elliptic@elliptic:~/solved/network200$ sudo ncat -l 80 < stream

...yields the vimeo video.

01010100 01101000 01100101 00100000 01101011 01100101 01111001

T h e k e y

00100000 01101001 01110011 00100000 01001101 01000011 01000001

i s M C A

00101101 00110101 00111000 01000010 01000101 00110001 00110100

- 5 8 B E 1 4

00110111 01000001 00100000 00111010 00101001

7 A : )

MCA-58BE147A

HTTP/1.1 200 OK

Server: Apache

X-Powered-By: PHP/5.4.14

Expires: Sun, 14 Jul 2013 21:17:37 GMT

Vary: User-Agent,Accept-Encoding

X-Frame-Options: SAMEORIGIN

X-DNS-Prefetch-Control: on

Content-Encoding: gzip

Content-Type: text/html; charset=UTF-8

Transfer-Encoding: chunked

Date: Sun, 14 Jul 2013 20:17:37 GMT

X-Varnish: 155913018

Age: 0

Via: 1.1 varnish

Cache-Control: no-store, no-cache, must-revalidate, post-check=0, pre-check=0

X-Varnish-Cache: 0

Connection: close

X-VServer: 10.90.128.147

...and loading it up onto localhost via ncat...

elliptic@elliptic:~/solved/network200$ sudo ncat -l 80 < stream

...yields the vimeo video.

01010100 01101000 01100101 00100000 01101011 01100101 01111001

T h e k e y

00100000 01101001 01110011 00100000 01001101 01000011 01000001

i s M C A

00101101 00110101 00111000 01000010 01000101 00110001 00110100

- 5 8 B E 1 4

00110111 01000001 00100000 00111010 00101001

7 A : )

MCA-58BE147A

MITRE 2013 Writeup : Binary 200 #2

ELF Linux executable file named Game.bin. Running it shows usage instructions that show that it takes one parameter:

elliptic@elliptic:~/solved/bin2002$ ./Game.bin

Usage: ./Game.bin

keyFile - The file containing your CD Key

Strings table and cat were both uninteresting. Disassembling the main function in GDB yielded some nice things.

. Stack initialization

0x0804896c <+0>: push ebp

0x0804896d <+1>: mov ebp,esp

0x0804896f <+3>: push ebx

0x08048970 <+4>: and esp,0xfffffff0

0x08048973 <+7>: sub esp,0x170

. If fewer than 1 parameter, jump to 0x80489AC

0x08048979 <+13>: cmp DWORD PTR [ebp+0x8],0x1

0x0804897d <+17>: jg 0x80489ac

. Usage

0x0804897f <+19>: mov eax,DWORD PTR [ebp+0xc]

0x08048982 <+22>: mov eax,DWORD PTR [eax]

0x08048984 <+24>: mov DWORD PTR [esp+0x4],eax

0x08048988 <+28>: mov DWORD PTR [esp],0x8049d90 ; 0x8049d90 = "Usage: %s\n"

0x0804898f <+35>: call 0x80487e0

0x08048994 <+40>: mov DWORD PTR [esp],0x8049da8 ; 0x8049da8 = "\nkeyFile - The file containing your CD Key"

0x0804899b <+47>: call 0x8048840

0x080489a0 <+52>: mov DWORD PTR [esp],0x0

0x080489a7 <+59>: call 0x8048870 ; end

. Body

0x080489ac <+64>: mov eax,DWORD PTR [ebp+0xc]

0x080489af <+67>: add eax,0x4

0x080489b2 <+70>: mov eax,DWORD PTR [eax]

0x080489b4 <+72>: mov DWORD PTR [esp+0x4],0x8049dd3 ; 0x8049dd3 = "rb"

0x080489bc <+80>: mov DWORD PTR [esp],eax

0x080489bf <+83>: call 0x80487d0 ; Opens some file with read ("rb") permissions; probably keyFile?

0x080489c4 <+88>: mov DWORD PTR [esp+0x160],eax

0x080489cb <+95>: cmp DWORD PTR [esp+0x160],0x0 ; Check if we can open the file

0x080489d3 <+103>: jne 0x80489ed

0x080489d5 <+105>: mov DWORD PTR [esp],0x8049dd8 ; 0x8049dd8 = "Error - couldn't open key file"

0x080489dc <+112>: call 0x8048790

0x080489e1 <+117>: mov DWORD PTR [esp],0x1

0x080489e8 <+124>: call 0x8048870 ; end

0x080489ed <+129>: mov eax,DWORD PTR [esp+0x160]

0x080489f4 <+136>: mov DWORD PTR [esp+0xc],eax ; file pointer

0x080489f8 <+140>: mov DWORD PTR [esp+0x8],0x1 ; number of elements in buffer (1)

0x08048a00 <+148>: mov DWORD PTR [esp+0x4],0x80 ; size of elements in buffer (0x80 = 128 bytes)

0x08048a08 <+156>: lea eax,[esp+0xb8]

0x08048a0f <+163>: mov DWORD PTR [esp],eax ; buffer

0x08048a12 <+166>: call 0x8048850 ; read elements into buffer

0x08048a17 <+171>: mov DWORD PTR [esp+0x15c],eax

0x08048a1e <+178>: cmp DWORD PTR [esp+0x15c],0x1 ; if successful (fread returns the number of elements successfully read)

0x08048a26 <+186>: je 0x8048a59

0x08048a28 <+188>: mov eax,ds:0x804a16c

0x08048a2d <+193>: mov DWORD PTR [esp+0xc],eax

0x08048a31 <+197>: mov DWORD PTR [esp+0x8],0x1f

0x08048a39 <+205>: mov DWORD PTR [esp+0x4],0x1

0x08048a41 <+213>: mov DWORD PTR [esp],0x8049df8 ; 0x8049df8 = "Error: couldn't read whole key\n"

0x08048a48 <+220>: call 0x80487f0

0x08048a4d <+225>: mov DWORD PTR [esp],0x2

0x08048a54 <+232>: call 0x8048870 ; end

Here, the program calls a bunch of really scary things like time and random, probably to compare against the key you enter. The correct key is 128 bytes of randomness? Probably not the key you're looking for.

0x08048a59 <+237>: mov DWORD PTR [esp],0x0

0x08048a60 <+244>: call 0x8048810

The more interesting part; why is it calling SDL functions? SDL is used for drawing.

0x08048b67 <+507>: mov DWORD PTR [esp],0x0

0x08048b6e <+514>: call 0x80487c0

0x08048b73 <+519>: test eax,eax

0x08048b75 <+521>: je 0x8048ba2

0x08048b77 <+523>: call 0x8048860

0x08048b7c <+528>: mov edx,DWORD PTR ds:0x804a16c

0x08048b82 <+534>: mov DWORD PTR [esp+0x8],eax

0x08048b86 <+538>: mov DWORD PTR [esp+0x4],0x8049e30

0x08048b8e <+546>: mov DWORD PTR [esp],edx

0x08048b91 <+549>: call 0x8048800

0x08048b96 <+554>: mov DWORD PTR [esp],0x4

0x08048b9d <+561>: call 0x8048870

0x08048ba2 <+566>: mov DWORD PTR [esp+0xc],0x0

0x08048baa <+574>: mov DWORD PTR [esp+0x8],0x10

0x08048bb2 <+582>: mov DWORD PTR [esp+0x4],0x12c

0x08048bba <+590>: mov DWORD PTR [esp],0x140

0x08048bc1 <+597>: call 0x8048780

...

0x08049ce0 <+4980>: cmp ax,0x13f

0x08049ce4 <+4984>: jle 0x8049c85

0x08049ce6 <+4986>: mov DWORD PTR [esp],0x3

0x08049ced <+4993>: call 0x8048830

0x08049cf2 <+4998>: mov eax,0x0

0x08049cf7 <+5003>: mov ebx,DWORD PTR [ebp-0x4]

0x08049cfa <+5006>: leave

0x08049cfb <+5007>: ret

We don't actually need the key to get here. Jumping to 0x08048b67 runs the SDL code:

(gdb) break main

Breakpoint 1 at 0x8048970

(gdb) run

Starting program: /home/elliptic/solved/bin2002/Game.bin

[Thread debugging using libthread_db enabled]

Using host libthread_db library "/lib/i386-linux-gnu/i686/cmov/libthread_db.so.1".

Breakpoint 1, 0x08048970 in main ()

(gdb) jump *0x08048b67

Continuing at 0x8048b67.

..spawning the window:

MCA-B17EC0D3

elliptic@elliptic:~/solved/bin2002$ ./Game.bin

Usage: ./Game.bin

keyFile - The file containing your CD Key

Strings table and cat were both uninteresting. Disassembling the main function in GDB yielded some nice things.

. Stack initialization

0x0804896c <+0>: push ebp

0x0804896d <+1>: mov ebp,esp

0x0804896f <+3>: push ebx

0x08048970 <+4>: and esp,0xfffffff0

0x08048973 <+7>: sub esp,0x170

. If fewer than 1 parameter, jump to 0x80489AC

0x08048979 <+13>: cmp DWORD PTR [ebp+0x8],0x1

0x0804897d <+17>: jg 0x80489ac

. Usage

0x0804897f <+19>: mov eax,DWORD PTR [ebp+0xc]

0x08048982 <+22>: mov eax,DWORD PTR [eax]

0x08048984 <+24>: mov DWORD PTR [esp+0x4],eax

0x08048988 <+28>: mov DWORD PTR [esp],0x8049d90 ; 0x8049d90 = "Usage: %s

0x0804898f <+35>: call 0x80487e0

0x08048994 <+40>: mov DWORD PTR [esp],0x8049da8 ; 0x8049da8 = "\nkeyFile - The file containing your CD Key"

0x0804899b <+47>: call 0x8048840

0x080489a0 <+52>: mov DWORD PTR [esp],0x0

0x080489a7 <+59>: call 0x8048870

. Body

0x080489ac <+64>: mov eax,DWORD PTR [ebp+0xc]

0x080489af <+67>: add eax,0x4

0x080489b2 <+70>: mov eax,DWORD PTR [eax]

0x080489b4 <+72>: mov DWORD PTR [esp+0x4],0x8049dd3 ; 0x8049dd3 = "rb"

0x080489bc <+80>: mov DWORD PTR [esp],eax

0x080489bf <+83>: call 0x80487d0

0x080489c4 <+88>: mov DWORD PTR [esp+0x160],eax

0x080489cb <+95>: cmp DWORD PTR [esp+0x160],0x0 ; Check if we can open the file

0x080489d3 <+103>: jne 0x80489ed

0x080489d5 <+105>: mov DWORD PTR [esp],0x8049dd8 ; 0x8049dd8 = "Error - couldn't open key file"

0x080489dc <+112>: call 0x8048790

0x080489e1 <+117>: mov DWORD PTR [esp],0x1

0x080489e8 <+124>: call 0x8048870

0x080489ed <+129>: mov eax,DWORD PTR [esp+0x160]

0x080489f4 <+136>: mov DWORD PTR [esp+0xc],eax ; file pointer

0x080489f8 <+140>: mov DWORD PTR [esp+0x8],0x1 ; number of elements in buffer (1)

0x08048a00 <+148>: mov DWORD PTR [esp+0x4],0x80 ; size of elements in buffer (0x80 = 128 bytes)

0x08048a08 <+156>: lea eax,[esp+0xb8]

0x08048a0f <+163>: mov DWORD PTR [esp],eax ; buffer

0x08048a12 <+166>: call 0x8048850

0x08048a17 <+171>: mov DWORD PTR [esp+0x15c],eax

0x08048a1e <+178>: cmp DWORD PTR [esp+0x15c],0x1 ; if successful (fread returns the number of elements successfully read)

0x08048a26 <+186>: je 0x8048a59

0x08048a28 <+188>: mov eax,ds:0x804a16c

0x08048a2d <+193>: mov DWORD PTR [esp+0xc],eax

0x08048a31 <+197>: mov DWORD PTR [esp+0x8],0x1f

0x08048a39 <+205>: mov DWORD PTR [esp+0x4],0x1

0x08048a41 <+213>: mov DWORD PTR [esp],0x8049df8 ; 0x8049df8 = "Error: couldn't read whole key\n"

0x08048a48 <+220>: call 0x80487f0

0x08048a4d <+225>: mov DWORD PTR [esp],0x2

0x08048a54 <+232>: call 0x8048870

Here, the program calls a bunch of really scary things like time and random, probably to compare against the key you enter. The correct key is 128 bytes of randomness? Probably not the key you're looking for.

0x08048a59 <+237>: mov DWORD PTR [esp],0x0

0x08048a60 <+244>: call 0x8048810

The more interesting part; why is it calling SDL functions? SDL is used for drawing.

0x08048b67 <+507>: mov DWORD PTR [esp],0x0

0x08048b6e <+514>: call 0x80487c0

0x08048b73 <+519>: test eax,eax

0x08048b75 <+521>: je 0x8048ba2

0x08048b77 <+523>: call 0x8048860

0x08048b7c <+528>: mov edx,DWORD PTR ds:0x804a16c

0x08048b82 <+534>: mov DWORD PTR [esp+0x8],eax

0x08048b86 <+538>: mov DWORD PTR [esp+0x4],0x8049e30

0x08048b8e <+546>: mov DWORD PTR [esp],edx

0x08048b91 <+549>: call 0x8048800

0x08048b96 <+554>: mov DWORD PTR [esp],0x4

0x08048b9d <+561>: call 0x8048870

0x08048ba2 <+566>: mov DWORD PTR [esp+0xc],0x0

0x08048baa <+574>: mov DWORD PTR [esp+0x8],0x10

0x08048bb2 <+582>: mov DWORD PTR [esp+0x4],0x12c

0x08048bba <+590>: mov DWORD PTR [esp],0x140

0x08048bc1 <+597>: call 0x8048780

...

0x08049ce0 <+4980>: cmp ax,0x13f

0x08049ce4 <+4984>: jle 0x8049c85

0x08049ce6 <+4986>: mov DWORD PTR [esp],0x3

0x08049ced <+4993>: call 0x8048830

0x08049cf2 <+4998>: mov eax,0x0

0x08049cf7 <+5003>: mov ebx,DWORD PTR [ebp-0x4]

0x08049cfa <+5006>: leave

0x08049cfb <+5007>: ret

We don't actually need the key to get here. Jumping to 0x08048b67 runs the SDL code:

(gdb) break main

Breakpoint 1 at 0x8048970

(gdb) run

Starting program: /home/elliptic/solved/bin2002/Game.bin

[Thread debugging using libthread_db enabled]

Using host libthread_db library "/lib/i386-linux-gnu/i686/cmov/libthread_db.so.1".

Breakpoint 1, 0x08048970 in main ()

(gdb) jump *0x08048b67

Continuing at 0x8048b67.

..spawning the window:

MCA-B17EC0D3

MITRE 2013 Writeup : Binary 200 #1

This one wasn't even a binary. It was a JAR file that you disassembled to yield the following:

// Decompiled by Jad v1.5.8e. Copyright 2001 Pavel Kouznetsov.

// Jad home page: http://www.geocities.com/kpdus/jad.html

// Decompiler options: packimports(3)

// Source File Name: Overrated.java

import java.io.PrintStream;

import java.security.MessageDigest;

import java.security.NoSuchAlgorithmException;

import java.util.Scanner;

public class Overrated

{

public Overrated()

{

}

public static void main(String args[])

{

String s = new String();

String s1 = "3d38629f056c942d561b63dbe8e94653";

Scanner scanner = new Scanner(System.in);

for(; !encrypt(s).equals(s1); s = scanner.nextLine())

System.out.print("Please enter the correct flag to continue: ");

System.out.println("Good job!");

}

public static String encrypt(String s)

{

String s1 = "";

try

{

MessageDigest messagedigest = MessageDigest.getInstance("MD5");

messagedigest.reset();

messagedigest.update(s.getBytes());

byte abyte0[] = messagedigest.digest();

String s2 = "";

for(int i = 0; i < abyte0.length; i++)

{

String s3 = Integer.toHexString(0xff & abyte0[i]);

if(s3.length() == 1)

s1 = (new StringBuilder()).append(s1).append("0").append(s3).toString();

else

s1 = (new StringBuilder()).append(s1).append(s3).toString();

}

}

catch(NoSuchAlgorithmException nosuchalgorithmexception) { }

return s1;

}

}

32-byte MD5. Pull up your favorite hash cracker and set it up for an 8-byte bruteforce salted with MCA- and with the character set 0123456789ABCDEF:

elliptic@elliptic:~/hashcat-0.46$ ./hashcat-cli32.bin -m20 -a3 --custom-charset1=?dABCDEF --pw-min=8 --pw-max=8 hash.txt ?1?1?1?1?1?1?1?1

Initializing hashcat v0.46 by atom with 8 threads and 32mb segment-size...

Added hashes from file hash.txt: 1 (1 salts)

Activating quick-digest mode for single-hash with salt

NOTE: press enter for status-screen

3d38629f056c942d561b63dbe8e94653:MCA-:8EC28E12

All hashes have been recovered

Input.Mode: Mask (?1?1?1?1?1?1?1?1)

Index.....: 0/1 (segment), 4294967296 (words), 0 (bytes)

Recovered.: 1/1 hashes, 1/1 salts

Speed/sec.: - plains, 10.39M words

Progress..: 254333816/4294967296 (5.92%)

Running...: 00:00:00:24

Estimated.: 00:00:06:28

Started: Mon Jul 29 21:41:45 2013

Stopped: Mon Jul 29 21:42:09 2013

elliptic@elliptic:~/hashcat-0.46$

MCA-8EC28E12

// Decompiled by Jad v1.5.8e. Copyright 2001 Pavel Kouznetsov.

// Jad home page: http://www.geocities.com/kpdus/jad.html

// Decompiler options: packimports(3)

// Source File Name: Overrated.java

import java.io.PrintStream;

import java.security.MessageDigest;

import java.security.NoSuchAlgorithmException;

import java.util.Scanner;

public class Overrated

{

public Overrated()

{

}

public static void main(String args[])

{

String s = new String();

String s1 = "3d38629f056c942d561b63dbe8e94653";

Scanner scanner = new Scanner(System.in);

for(; !encrypt(s).equals(s1); s = scanner.nextLine())

System.out.print("Please enter the correct flag to continue: ");

System.out.println("Good job!");

}

public static String encrypt(String s)

{

String s1 = "";

try

{

MessageDigest messagedigest = MessageDigest.getInstance("MD5");

messagedigest.reset();

messagedigest.update(s.getBytes());

byte abyte0[] = messagedigest.digest();

String s2 = "";

for(int i = 0; i < abyte0.length; i++)

{

String s3 = Integer.toHexString(0xff & abyte0[i]);

if(s3.length() == 1)

s1 = (new StringBuilder()).append(s1).append("0").append(s3).toString();

else

s1 = (new StringBuilder()).append(s1).append(s3).toString();

}

}

catch(NoSuchAlgorithmException nosuchalgorithmexception) { }

return s1;

}

}

32-byte MD5. Pull up your favorite hash cracker and set it up for an 8-byte bruteforce salted with MCA- and with the character set 0123456789ABCDEF:

elliptic@elliptic:~/hashcat-0.46$ ./hashcat-cli32.bin -m20 -a3 --custom-charset1=?dABCDEF --pw-min=8 --pw-max=8 hash.txt ?1?1?1?1?1?1?1?1

Initializing hashcat v0.46 by atom with 8 threads and 32mb segment-size...

Added hashes from file hash.txt: 1 (1 salts)

Activating quick-digest mode for single-hash with salt

NOTE: press enter for status-screen

3d38629f056c942d561b63dbe8e94653:MCA-:8EC28E12

All hashes have been recovered

Input.Mode: Mask (?1?1?1?1?1?1?1?1)

Index.....: 0/1 (segment), 4294967296 (words), 0 (bytes)

Recovered.: 1/1 hashes, 1/1 salts

Speed/sec.: - plains, 10.39M words

Progress..: 254333816/4294967296 (5.92%)

Running...: 00:00:00:24

Estimated.: 00:00:06:28

Started: Mon Jul 29 21:41:45 2013

Stopped: Mon Jul 29 21:42:09 2013

elliptic@elliptic:~/hashcat-0.46$

MCA-8EC28E12

MITRE 2013 Writeup : Binary 100

This one was actually broken and unsolvable for some time. It was a Linux executable that displayed the following:

elliptic@elliptic:~/solved/bin100$ ./hello

Hello, World!

Don't forget the dash

Wow. Uninformative. Let's open it in GDB:

elliptic@elliptic:~/solved/bin100$ gdb --quiet hello

Reading symbols from /home/elliptic/solved/bin100/hello...(no debugging symbols found)...done.

(gdb) info fun

All defined functions:

Non-debugging symbols:

0x080482b8 _init

0x080482f0 puts

0x080482f0 puts@plt

0x08048300 __gmon_start__

0x08048300 __gmon_start__@plt

0x08048310 __libc_start_main

0x08048310 __libc_start_main@plt

0x08048320 _start

0x08048350 deregister_tm_clones

0x08048380 register_tm_clones

0x080483c0 __do_global_dtors_aux

0x080483e0 frame_dummy

0x0804840c main

0x08048428 MCA02076b3d

0x08048450 __libc_csu_fini

0x08048460 __libc_csu_init

0x080484ba __i686.get_pc_thunk.bx

0x080484c0 _fini

(gdb)

A basic look at the function list gives the key outright. MCA-02076B3D

elliptic@elliptic:~/solved/bin100$ ./hello

Hello, World!

Don't forget the dash

Wow. Uninformative. Let's open it in GDB:

elliptic@elliptic:~/solved/bin100$ gdb --quiet hello

Reading symbols from /home/elliptic/solved/bin100/hello...(no debugging symbols found)...done.

(gdb) info fun

All defined functions:

Non-debugging symbols:

0x080482b8 _init

0x080482f0 puts

0x080482f0 puts@plt

0x08048300 __gmon_start__

0x08048300 __gmon_start__@plt

0x08048310 __libc_start_main

0x08048310 __libc_start_main@plt

0x08048320 _start

0x08048350 deregister_tm_clones

0x08048380 register_tm_clones

0x080483c0 __do_global_dtors_aux

0x080483e0 frame_dummy

0x0804840c main

0x08048428 MCA02076b3d

0x08048450 __libc_csu_fini

0x08048460 __libc_csu_init

0x080484ba __i686.get_pc_thunk.bx

0x080484c0 _fini

(gdb)

A basic look at the function list gives the key outright. MCA-02076B3D

MITRE 2013 Writeup : Cryptography 100

Easy one. File came in the form of a text file:

qnfzvyxfunxrolxrivaynjirezlzvyxfunxroevatfnyyguro

blfgbgurlnequnafcbvagrqqbjagurfgerrgnggurfznyyteb

hcbslbhatzraurnqvatgurvejnltrfghevatjvguuvfpubpby

ngrfunxrjungqnaybbxrqhcsebzuvfynjazbjrenaqjbaqrer

qjulunafxrcgznxvatzvyxfunxrfnaqgurapbzvatgbuvflne

qjvgubhgoevatvatuvzbarpurpxbhgzlzvyxfunxrrrgfoeva

tvatnyygubfroblfgbzllnequnafgubfrneragoblfgurlerg

urlerabgoblfinggurleryvggyrtveyffnlvatgurleryvxrv

gforggregunalbhefchgbalbhetynffrfunafgurlergbbfyb

jgborlbhatzranggenpgrqolnsebmraqnvelonfrqgerngnaq

gurlerzhggrevatfbzrguvatvguvaxgurlzvtugorbhgsbezb

ergunalbhezvyxfunxrcnyinglbhguvaxgurlzvtugorserap

ugursyntvfzpnfrirakfvkkfvkkavarkfvkkfrirakfvkksvi

rkehavguvaxgurlermbzovrfohgmbzovrfqbagyvxrzvyxfun

xrfgurlernyyynpgbfrvagbyrenagiurernerlbhtbvatinvg

invgsbezrqbagyrggurztrgzlzvyxfunxr

Frequency analysis:

r (114) occurs 11.573472041612484 %

g (103) occurs 9.622886866059818 %

v (118) occurs 7.022106631989597 %

n (110) occurs 6.892067620286085 %

u (117) occurs 6.892067620286085 %

a (97) occurs 6.631989596879063 %

b (98) occurs 6.501950585175553 %

f (102) occurs 6.371911573472042 %

e (101) occurs 5.3315994798439535 %

l (108) occurs 4.4213263979193757 %

y (121) occurs 4.031209362808843 %

z (122) occurs 3.77113133940182 %

x (120) occurs 3.2509752925877766 %

t (116) occurs 3.1209362808842653 %

q (113) occurs 2.47074122236671 %

h (104) occurs 2.2106631989596878 %

o (111) occurs 2.2106631989596878 %

k (107) occurs 1.5604681404421327 %

j (106) occurs 1.4304291287386216 %

i (105) occurs 1.3003901170351105 %

p (112) occurs 1.1703511053315995 %

s (115) occurs 1.0403120936280884 %

c (99) occurs 0.7802340702210663 %

m (109) occurs 0.39011703511053317 %

The most common letters are r at 11.5% and g at 9.6%. "Hey," says you, "that looks like the standard frequency table!) So it's probably a substitution cipher. Even better, it's a Caesar cipher.

Rotated 13 letters forward:

DASMILKSHAKEBYKEVINLAWVERMYMILKSHAKEBRINGSALLTHEBOYSTO

THEYARDHANSPOINTEDDOWNTHESTREETATTHESMALLGROUPOFYOUNGM

ENHEADINGTHEIRWAYGESTURINGWITHHISCHOCOLATESHAKEWHATDAN

LOOKEDUPFROMHISLAWNMOWERANDWONDEREDWHYHANSKEPTMAKINGMI

LKSHAKESANDTHENCOMINGTOHISYARDWITHOUTBRINGINGHIMONECHE

CKOUTMYMILKSHAKEEETSBRINGINGALLTHOSEBOYSTOMYYARDHANSTH

OSEARENTBOYSTHEYRETHEYRENOTBOYSVATTHEYRELITTLEGIRLSSAY

INGTHEYRELIKEITSBETTERTHANYOURSPUTONYOURGLASSESHANSTHE

YRETOOSLOWTOBEYOUNGMENATTRACTEDBYAFROZENDAIRYBASEDTREA

TANDTHEYREMUTTERINGSOMETHINGITHINKTHEYMIGHTBEOUTFORMOR

ETHANYOURMILKSHAKEPALVATYOUTHINKTHEYMIGHTBEFRENCHTHEFL

AGISMCASEVENXSIXXSIXXNINEXSIXXSEVENXSIXXFIVEXRUNITHINK

THEYREZOMBIESBUTZOMBIESDONTLIKEMILKSHAKESTHEYREALLLACT

OSEINTOLERANTVHEREAREYOUGOINGVAITVAITFORMEDONTLETTHEMG

ETMYMILKSHAKE

MCA-76696765. Very simple; took us (and most of the teams) a few minutes to solve.

qnfzvyxfunxrolxrivaynjirezlzvyxfunxroevatfnyyguro

blfgbgurlnequnafcbvagrqqbjagurfgerrgnggurfznyyteb

hcbslbhatzraurnqvatgurvejnltrfghevatjvguuvfpubpby

ngrfunxrjungqnaybbxrqhcsebzuvfynjazbjrenaqjbaqrer

qjulunafxrcgznxvatzvyxfunxrfnaqgurapbzvatgbuvflne

qjvgubhgoevatvatuvzbarpurpxbhgzlzvyxfunxrrrgfoeva

tvatnyygubfroblfgbzllnequnafgubfrneragoblfgurlerg

urlerabgoblfinggurleryvggyrtveyffnlvatgurleryvxrv

gforggregunalbhefchgbalbhetynffrfunafgurlergbbfyb

jgborlbhatzranggenpgrqolnsebmraqnvelonfrqgerngnaq

gurlerzhggrevatfbzrguvatvguvaxgurlzvtugorbhgsbezb

ergunalbhezvyxfunxrcnyinglbhguvaxgurlzvtugorserap

ugursyntvfzpnfrirakfvkkfvkkavarkfvkkfrirakfvkksvi

rkehavguvaxgurlermbzovrfohgmbzovrfqbagyvxrzvyxfun

xrfgurlernyyynpgbfrvagbyrenagiurernerlbhtbvatinvg

invgsbezrqbagyrggurztrgzlzvyxfunxr

Frequency analysis:

r (114) occurs 11.573472041612484 %

g (103) occurs 9.622886866059818 %

v (118) occurs 7.022106631989597 %

n (110) occurs 6.892067620286085 %

u (117) occurs 6.892067620286085 %

a (97) occurs 6.631989596879063 %

b (98) occurs 6.501950585175553 %

f (102) occurs 6.371911573472042 %

e (101) occurs 5.3315994798439535 %

l (108) occurs 4.4213263979193757 %

y (121) occurs 4.031209362808843 %

z (122) occurs 3.77113133940182 %

x (120) occurs 3.2509752925877766 %

t (116) occurs 3.1209362808842653 %

q (113) occurs 2.47074122236671 %

h (104) occurs 2.2106631989596878 %

o (111) occurs 2.2106631989596878 %

k (107) occurs 1.5604681404421327 %

j (106) occurs 1.4304291287386216 %

i (105) occurs 1.3003901170351105 %

p (112) occurs 1.1703511053315995 %

s (115) occurs 1.0403120936280884 %

c (99) occurs 0.7802340702210663 %

m (109) occurs 0.39011703511053317 %

The most common letters are r at 11.5% and g at 9.6%. "Hey," says you, "that looks like the standard frequency table!) So it's probably a substitution cipher. Even better, it's a Caesar cipher.

Rotated 13 letters forward:

DASMILKSHAKEBYKEVINLAWVERMYMILKSHAKEBRINGSALLTHEBOYSTO

THEYARDHANSPOINTEDDOWNTHESTREETATTHESMALLGROUPOFYOUNGM

ENHEADINGTHEIRWAYGESTURINGWITHHISCHOCOLATESHAKEWHATDAN

LOOKEDUPFROMHISLAWNMOWERANDWONDEREDWHYHANSKEPTMAKINGMI

LKSHAKESANDTHENCOMINGTOHISYARDWITHOUTBRINGINGHIMONECHE

CKOUTMYMILKSHAKEEETSBRINGINGALLTHOSEBOYSTOMYYARDHANSTH

OSEARENTBOYSTHEYRETHEYRENOTBOYSVATTHEYRELITTLEGIRLSSAY

INGTHEYRELIKEITSBETTERTHANYOURSPUTONYOURGLASSESHANSTHE

YRETOOSLOWTOBEYOUNGMENATTRACTEDBYAFROZENDAIRYBASEDTREA

TANDTHEYREMUTTERINGSOMETHINGITHINKTHEYMIGHTBEOUTFORMOR

ETHANYOURMILKSHAKEPALVATYOUTHINKTHEYMIGHTBEFRENCHTHEFL

AGISMCASEVENXSIXXSIXXNINEXSIXXSEVENXSIXXFIVEXRUNITHINK

THEYREZOMBIESBUTZOMBIESDONTLIKEMILKSHAKESTHEYREALLLACT

OSEINTOLERANTVHEREAREYOUGOINGVAITVAITFORMEDONTLETTHEMG

ETMYMILKSHAKE

MCA-76696765. Very simple; took us (and most of the teams) a few minutes to solve.

MITRE 2013 Writeup : Cryptography 200

Cryptography 200 was an encrypted text file. At first glance it's not hard to notice that some patterns repeat:

zhdgduknsardyoeyhdxlnikgullksaeshxaqzhtxcntamjoxrehgsbgncglhtbnnel

oahotgkrdjhdgddjldggtkihoabgsdufhjemziseiuzhdhoriolheuglkkylesskrx

btgkfhbenxammeooprbisnelgnvotgzhdzwhytdjlhvvhotgkacbemzuqkoezhdhlt

kczxbttckkvhoisnezjvdtttxenltgksokcjrechamjiwzhdgduknsard

which suggests a repeating encryption pattern of some sort (short, repeating XOR cipher turned out to not be the case). The text is also rather long, suggesting that frequency analysis is possible.

Frequency analysis:

n (110) occurs 7.04927334838747 %

t (116) occurs 6.216663771640322 %

s (115) occurs 6.035319321405825 %

k (107) occurs 5.705749522320653 %

z (122) occurs 5.688147762144635 %

g (103) occurs 5.573273116785363 %

d (100) occurs 5.568177870418621 %

e (101) occurs 5.521162642580047 %

o (111) occurs 5.445428753401656 %

r (114) occurs 5.301140640379828 %

h (104) occurs 5.066759307509698 %

a (97) occurs 4.228822882288229 %

u (117) occurs 3.9571536100978517 %

m (109) occurs 3.7324995657460485 %

i (105) occurs 3.145619825140409 %

c (99) occurs 3.0988361994094147 %

l (108) occurs 2.954548086387586 %

y (121) occurs 2.8218400787447164 %

x (120) occurs 2.638642811649586 %

q (113) occurs 2.2442244224422443 %

v (118) occurs 1.7567019859880726 %

j (106) occurs 1.7418794511030051 %

b (98) occurs 1.6527126396850211 %

f (102) occurs 1.3185107984482658 %

w (119) occurs 0.9365989230501998 %

p (112) occurs 0.6003126628452319 %

Comparison with an actual frequency list doesn't yield any results (and substituting the letters in by frequency yields gibberish).

It turns out there's a way to solve Vigenère ciphers by finding repetitions in the text and retrieving the number of letters between them. Vigenère ciphers are essentially interlaced Caesar ciphers. Ex:

Key: abcd

Message: mexicandanceparty

Extended Key: abcdabcdabcdabcda

Encrypted Msg: mfzlcbpgaoehpbtwy

where each letter of the message is added to that of the key (A = 0, B = 1, etc., so mexicandanceparty + abcdabcdabcdabcda = m, mexicandanceparty + abcdabcdabcdabcda = f, and so on).

The weakness in a Vigenère cipher is that since the key is repeated for the length of the message, if your message has repeated words, there's a chance that the encrypted message will have repetitions as well. Ex:

Key: abcd

Message: whatyouseeiswhatyouget

Extended Key: abcdabcdabcdabcdabcdab

Encrypted Msg: wicwypwvefkvwicwypwjeu

There's 12 letters from the above example between the first letters of the words (wicwypwvefkv = 12 letters) so we know that since the key repeats, the length of the key must be a factor of this (a length of 1, 2, 3, 4, 6, or 12). With enough repetitions, you can figure out the length of the key. And using a dictionary of words, it's possible to algorithmically determine what the key is, similar to how you'd solve a cryptogram.

This is all ~ super great ~ because not only does our text have repetitions but it is ridiculously long and fits both requirements for a solvable Vigenère cipher. Feeding it through this lazy person cipher solver yields the key (gaz) and the decrypted text:

theadventuresofsherlockholmesbysirarthurconandoyleiascand

alinbohemiaiitheredheadedleagueiiiacaseofidentityivthebos

combevalleymysteryvthefiveorangepipsvithemanwiththetwiste

dlipviitheadventureofthebluecarbuncleviiitheadventureofth

especkledbandixtheadventureoftheengineersthumbxtheadventu

reofthenoblebachelorxitheadventureoftheberylcoronetxiithe

adventureofthecopperbeechesadventureiascandalinbohemiaito

sherlockholmessheisalwaysthewomanihaveseldomheardhimmenti

onherunderanyothernameinhiseyessheeclipsesandpredominates

thewholeofhersexitwasnotthathefeltanyemotionakintolovefor

ireneadlerallemotionsandthatoneparticularlywereabhorrentt

ohiscoldprecisebutadmirablybalancedmindhewasitakeitthemos

tperfectreasoningandobservingmachinethattheworldhasseenbu

tasaloverhewouldhaveplacedhimselfinafalsepositionhenevers

pokeoft..................................................

............et cetera et cetera .........................

...theflagismcafivezerofivetwofourefourseven.............

MCA-50524E47

zhdgduknsardyoeyhdxlnikgullksaeshxaqzhtxcntamjoxrehgsbgncglhtbnnel

oahotgkrdjhdgddjldggtkihoabgsdufhjemziseiuzhdhoriolheuglkkylesskrx

btgkfhbenxammeooprbisnelgnvotgzhdzwhytdjlhvvhotgkacbemzuqkoezhdhlt

kczxbttckkvhoisnezjvdtttxenltgksokcjrechamjiwzhdgduknsard

which suggests a repeating encryption pattern of some sort (short, repeating XOR cipher turned out to not be the case). The text is also rather long, suggesting that frequency analysis is possible.

Frequency analysis:

n (110) occurs 7.04927334838747 %

t (116) occurs 6.216663771640322 %

s (115) occurs 6.035319321405825 %

k (107) occurs 5.705749522320653 %

z (122) occurs 5.688147762144635 %

g (103) occurs 5.573273116785363 %

d (100) occurs 5.568177870418621 %

e (101) occurs 5.521162642580047 %

o (111) occurs 5.445428753401656 %

r (114) occurs 5.301140640379828 %

h (104) occurs 5.066759307509698 %

a (97) occurs 4.228822882288229 %

u (117) occurs 3.9571536100978517 %

m (109) occurs 3.7324995657460485 %

i (105) occurs 3.145619825140409 %

c (99) occurs 3.0988361994094147 %

l (108) occurs 2.954548086387586 %

y (121) occurs 2.8218400787447164 %

x (120) occurs 2.638642811649586 %

q (113) occurs 2.2442244224422443 %

v (118) occurs 1.7567019859880726 %

j (106) occurs 1.7418794511030051 %

b (98) occurs 1.6527126396850211 %

f (102) occurs 1.3185107984482658 %

w (119) occurs 0.9365989230501998 %

p (112) occurs 0.6003126628452319 %

Comparison with an actual frequency list doesn't yield any results (and substituting the letters in by frequency yields gibberish).

It turns out there's a way to solve Vigenère ciphers by finding repetitions in the text and retrieving the number of letters between them. Vigenère ciphers are essentially interlaced Caesar ciphers. Ex:

Key: abcd

Message: mexicandanceparty

Extended Key: abcdabcdabcdabcda

Encrypted Msg: mfzlcbpgaoehpbtwy

where each letter of the message is added to that of the key (A = 0, B = 1, etc., so mexicandanceparty + abcdabcdabcdabcda = m, mexicandanceparty + abcdabcdabcdabcda = f, and so on).

The weakness in a Vigenère cipher is that since the key is repeated for the length of the message, if your message has repeated words, there's a chance that the encrypted message will have repetitions as well. Ex:

Key: abcd

Message: whatyouseeiswhatyouget

Extended Key: abcdabcdabcdabcdabcdab

Encrypted Msg: wicwypwvefkvwicwypwjeu

There's 12 letters from the above example between the first letters of the words (wicwypwvefkv = 12 letters) so we know that since the key repeats, the length of the key must be a factor of this (a length of 1, 2, 3, 4, 6, or 12). With enough repetitions, you can figure out the length of the key. And using a dictionary of words, it's possible to algorithmically determine what the key is, similar to how you'd solve a cryptogram.

This is all ~ super great ~ because not only does our text have repetitions but it is ridiculously long and fits both requirements for a solvable Vigenère cipher. Feeding it through this lazy person cipher solver yields the key (gaz) and the decrypted text:

theadventuresofsherlockholmesbysirarthurconandoyleiascand

alinbohemiaiitheredheadedleagueiiiacaseofidentityivthebos

combevalleymysteryvthefiveorangepipsvithemanwiththetwiste

dlipviitheadventureofthebluecarbuncleviiitheadventureofth

especkledbandixtheadventureoftheengineersthumbxtheadventu

reofthenoblebachelorxitheadventureoftheberylcoronetxiithe

adventureofthecopperbeechesadventureiascandalinbohemiaito

sherlockholmessheisalwaysthewomanihaveseldomheardhimmenti

onherunderanyothernameinhiseyessheeclipsesandpredominates

thewholeofhersexitwasnotthathefeltanyemotionakintolovefor

ireneadlerallemotionsandthatoneparticularlywereabhorrentt

ohiscoldprecisebutadmirablybalancedmindhewasitakeitthemos

tperfectreasoningandobservingmachinethattheworldhasseenbu

tasaloverhewouldhaveplacedhimselfinafalsepositionhenevers

pokeoft..................................................

............et cetera et cetera .........................

...theflagismcafivezerofivetwofourefourseven.............

MCA-50524E47

MITRE 24-Hour CTF

So I did this MITRE 24-hour CTF with Vincent, the president of the hacking club over at the University of Florida, along with two other fellows drjmoo and TobalJackson (also at UF) under the team flag SomethingEpic and landed 12th place. That was pretty cool. I crashed for 24 hours at Vincent's house and drjmoo and TobalJackson crashed over at UF and fate twisted our way, landing us five places above Georgia Tech and its many MadHatter clones (and only four places down from the Berkeley team, no less).

For the uninitiated, a CTF (capture-the-flag) is a hacking competition where there are certain puzzles in any form, be it an image, or a virtual disk, or an executable or what-say-you, and the idea is to recover some flag cleverly hidden inside of it. The topics this time around were:

Binary: digging through executable files

Cryptography: encoded files

Forensics: partially-damaged or unaccessible files

Grab Bag: anything/everything

Networking: ..networking

Web: ..web

Flags were in the format MCA-{8 digits of hex}

Writeups of a few:

Binary 100

Binary 200 #1

Binary 200 #2

Cryptography 100

Cryptography 200

Networking 200

|

| Hey look it's us! |

For the uninitiated, a CTF (capture-the-flag) is a hacking competition where there are certain puzzles in any form, be it an image, or a virtual disk, or an executable or what-say-you, and the idea is to recover some flag cleverly hidden inside of it. The topics this time around were:

Binary: digging through executable files

Cryptography: encoded files

Forensics: partially-damaged or unaccessible files

Grab Bag: anything/everything

Networking: ..networking

Web: ..web

Flags were in the format MCA-{8 digits of hex}

Writeups of a few:

Binary 100

Binary 200 #1

Binary 200 #2

Cryptography 100

Cryptography 200

Networking 200

Sunday, June 16, 2013

DEFCON Quals

So my friend Vincent referred me to this quaint little convention called

DEFCON

and more importantly the capture-the-flag competition they were hosting, colorfully titled the Legitimate Business Syndicate Casualty Trauma Festival (their domain was LegitBS.net) . Figured it would be nice, given that it's a hacking competition and I haven't done that in a while, so I joined under the banner SSHv3 with Vincent and two states universities in Florida.

It was relatively nice starting -- Vincent let me know that he had a stash of junk food and coffee if needed (a warning which I unwisely waved off) -- and then it started becoming terrible when the first question opened up.

Nigel was invited to it with us but he stayed home. We set up a webcam session for him to participate in proxy. But after glancing at the first question he effectively gave up and began using the webcam session to broadcast 4th grade video projects and to overlay his face with a picture of a cat.

After three hours no one had solved a thing yet and the organizers decided to open up another question while simultaneously insulting our heritage. We figured out pretty quickly that it was a sliding puzzle and managed to come up with some innovative ideas. My approach was to generate every possible configuration (9! / 2 = 181440 possibilities) and their solutions, store them on disk, and then just do a lookup when it was necessary (the puzzle was timed). Eventually, when it was taking too long (this was around 2 AM) we collectively decided to throw out my approach and do something sensible. I offered to modify it to accept any state already found as a goal state and just append the solution, which worked a bit better but not enough.

The program had stored nearly 3000 solutions by now and it was solving them relatively quickly but there were a few that were troublesome. We'd both gone through nearly two cups of coffee around now. Vincent eventually started looking up his own algorithms when it became clear I was too stubborn to accept that my horribly inefficient bruteforce method was awful.

He found a Java program that did it relatively fast and we began hacking through the code for it.

Around this time, he pointed out that the sun was coming up.

I was ready to kill something by this point. The scoreboard's theme -- a flashy, pink, hacking syndicate posing as a Japanese business -- grew from "mildly humorous" to "absolutely mocking." I downed another coffee.

We were both completely dead, and I was relegated to running the code every time it failed in the hopes that we'd get lucky with really easy slider configurations, when suddenly a line that wasn't a slider puzzle appeared at the bottom of the window:

"The key is enemas on parade."

After 10 hours we have contributed our first and only point to the team.

The people at the universities fared better; they managed a nice 17 points by the end of it (plus our one makes 18) and actually got some sleep.

It was not bad.

Tuesday, June 11, 2013

Science vs. Religion is a broken debate

It's not broken, mind you, but the important question got distorted.

Above is a video of Richard Feynman explaining his take on science in comparison to religion. I'm probably biased, because I respect the guy a ton and he's one of my biggest role models, but what he says in it is exactly correct. I'm stealing his thoughts in this post because I never really found a good way to state my frustration with the theism debate until now.

The current debate, relegated largely to frustrated forums, relay chats, and Reddit, deals with two frameworks.

One, from the side of theism, is that God is the ultimate truth and in order to see that, you must be engaged in the ritual to become closer to God (worship, prayer, what have you). Through miracles, or emotional connections, you will eventually become so close to God that it no longer matters that the scientific grounding is loose because it is not the logical understanding of the nature of the universe that matters but the emotional connection, simply because religion was clearly not made for making structure in the chaos of nature but for emotional and social guidance. People worship religions because it helps mold their behavior and not because it explains why the Earth orbits around the Sun. I don't agree with this view because I don't believe in compromising logic for emotional convenience but I understand why people would believe in it.

The second (more muddled side) is atheism, which I'm going to define here as the solid belief that no God exists. There are many confused variants of it, especially with agnosticism (which claims that the existence/non-existence of a deity is by definition unknowable) and religious skepticism (which claims that one is unbelieving in a certain deity until further evidence gives significant doubt otherwise). In this framework, atheism is an assertion in its own right; it is the assertion that "no god, or at least none of the gods proposed by currently-standing religions, exist in our universe," as opposed to the theistic belief that a god does exist. And this is fine, albeit ugly.

My problem with this is when atheists claim it is against the spirit of the scientific method to believe in a God because it shows that they do not understand the scientific method at all. It is impossible to understand the whole of the universe simply because there is no way to verify that one's knowledge of the universe is complete. Even if all of the proposed questions have been answered, there is no way to verify with 100% certainty that those answers are true. One can only make progress if they accept that certain assumptions will have to be taken for granted; we can only proceed with logic once we assume that our understanding of logic is correct. Of course, it's unscientific to simply assume something is correct, but we do so because it is the only means we have of making progress.

Since we cannot understand the whole of the universe, it is reasonable to say that the importance of science is not to find the end result of our pursuit for knowledge. (You could argue that the "end result of science" -- that is, modern science -- has a lot of practical applications that benefit mankind, but if it wasn't clear by now I'm speaking from a theoretical point-of-view and not a practical one). If it isn't about reaching the end, then the only possible explanation for the purpose of science, by process of elimination, is that science is about the pursuit itself. Scientists don't look for the answer to reality, but rather they pose questions about reality and attempt to make structure out of them based on some precepts that they have assumed to be true as a baseline. This process, called the scientific method, is useful because it creates more structure out of what we have.

It serves no purpose for science whatsoever to assume the end result that "there is no God." What benefit does that have for science? Will we be able to solve the absence of dark matter from the non-existence of God? Will we find the relationship with gravity to the electromagnetic force because we accept that there is no God? You could argue that, yes, it would help us pose such questions in the first place. But given that the scientific community already poses those questions, it does not help us at all.

Theism, at least, has some purpose, as the proof of a deity would dramatically alter our understanding of science, and create new structure. Theists, however, have a bad habit of claiming that structure is not necessary as long as you have faith, and that's not particularly helpful either.

If you didn't get any of that:

- Science exists so we can understand more science.

- Accepting certain assertions in science help us create structure so we can understand more science.

- The assertion that God does not exist does not help us create structure apart from denying the structures proposed by theism.

- Therefore, the proposal that "there is no God" is more scientific than "what if there is a God?" is completely bogus because the former has no potential to create structure while the latter has does.

- That being said, the convenient theistic response of "there is a God, and all of your questions will be answered through your ambiguous faith in God" also has no potential to create structure and is equally useless to science.

Two cents.

Saturday, June 8, 2013

Let's Talk About Etymology

I really like etymology. Let's talk about etymology! Quoth wiki:

Etymology is the study of the history of words, their origins, and how their form and meaning have changed over time. By an extension, the term "the etymology of [a word]" means the origin of the particular word.

For languages with a long written history, etymologists make use of texts in these languages and texts about the languages to gather knowledge about how words were used during earlier periods of their history and when they entered the languages in question. Etymologists also apply the methods of comparative linguistics to reconstruct information about languages that are too old for any direct information to be available.

By analyzing related languages with a technique known as the comparative method, linguists can make inferences about their shared parent language and its vocabulary. In this way, word roots have been found that can be traced all the way back to the origin of, for instance, the Indo-European language family.

Even though etymological research originally grew from the philological tradition, currently much etymological research is done on language families where little or no early documentation is available, such as Uralic and Austronesian.

I might be working on a game for Bacon Game Jam 05 but be quiet about this.

EDIT: Just kidding! I hate programming.

Etymological theory recognizes that words originate through a limited number of basic mechanisms, the most important of which are borrowing (i.e., the adoption of "loanwords" from other languages); word formation such as derivation and compounding; and onomatopoeia and sound symbolism, (i.e., the creation of imitative words such as "click").

While the origin of newly emerged words is often more or less transparent, it tends to become obscured through time due to sound change or semantic change. Due to sound change, it is not readily obvious that the English word set is related to the word sit (the former is originally a causative formation of the latter). It is even less obvious that bless is related to blood (the former was originally a derivative with the meaning "to mark with blood").

Semantic change may also occur. For example, the English word bead originally meant "prayer". It acquired its modern meaning through the practice of counting the recitation of prayers by using beads.

Etymology is the study of the history of words, their origins, and how their form and meaning have changed over time. By an extension, the term "the etymology of [a word]" means the origin of the particular word.

For languages with a long written history, etymologists make use of texts in these languages and texts about the languages to gather knowledge about how words were used during earlier periods of their history and when they entered the languages in question. Etymologists also apply the methods of comparative linguistics to reconstruct information about languages that are too old for any direct information to be available.

By analyzing related languages with a technique known as the comparative method, linguists can make inferences about their shared parent language and its vocabulary. In this way, word roots have been found that can be traced all the way back to the origin of, for instance, the Indo-European language family.

Even though etymological research originally grew from the philological tradition, currently much etymological research is done on language families where little or no early documentation is available, such as Uralic and Austronesian.

I might be working on a game for Bacon Game Jam 05 but be quiet about this.

EDIT: Just kidding! I hate programming.

Etymological theory recognizes that words originate through a limited number of basic mechanisms, the most important of which are borrowing (i.e., the adoption of "loanwords" from other languages); word formation such as derivation and compounding; and onomatopoeia and sound symbolism, (i.e., the creation of imitative words such as "click").

While the origin of newly emerged words is often more or less transparent, it tends to become obscured through time due to sound change or semantic change. Due to sound change, it is not readily obvious that the English word set is related to the word sit (the former is originally a causative formation of the latter). It is even less obvious that bless is related to blood (the former was originally a derivative with the meaning "to mark with blood").

Semantic change may also occur. For example, the English word bead originally meant "prayer". It acquired its modern meaning through the practice of counting the recitation of prayers by using beads.

Tuesday, May 21, 2013

Aussie Puzzling Spectaculare

SPOILERS: If you're interested in solving some of the puzzles here then don't read this; I clue in some of the answers.

--

There are two essential truths to the universe.

The first is that the jelly will never spread evenly on your toast no matter how hard you try.

The second is that everything becomes more interesting during exam week. Including Australian puzzle hunts. Yes, me and a collective of eight or nine friends decided to take on the Melbourne University Mathematics and Statistics Society's Annual Puzzle Hunt.

This was, needless to say, a very bad idea.

The puzzles are extremely difficult, each in a different way. Look at any of them and you'll understand why. You have no idea what you're looking for. There are no prompts, no questions. You have to notice that each wheel has 26 spokes and infer that each contains a letter of the English alphabet. Or that certain Chinese letters read out as English words when rotated counterclockwise. Last year, myself and a team of six managed to solved a total of zero puzzles.

Zero out of twenty.

Despite this, I convinced a bunch of people to participate and we (somewhat begrudgingly) assembled an odd team of some nine people. Given last year's brilliant score, I wasn't particularly optimistic.

We wrecked. We solved ten out of twenty puzzles, on par with the MIT team, and landed 54th place out of 219 teams.

I had known previously that a lot of those in my group were rather smart but it wasn't ever as apparent as during the puzzlehunt. One guy identified eight buildings based on their overhead street maps. Two others programmed bruteforce algorithms for a network. Another guy converted 2D animations of what he described as "pokemon MRI's" and created full 3D models out of them. Yet another recognized what sort of looked to me like a barcode to be an abstract rendition of Whistler's Mother. Same guy took what we had of a country code (an I and a P?) and correctly guessed "Swedish Meatballs" five minutes before the deadline.

All in all a really excellent experience, and I'd encourage anyone to participate.

--

There are two essential truths to the universe.

The first is that the jelly will never spread evenly on your toast no matter how hard you try.

The second is that everything becomes more interesting during exam week. Including Australian puzzle hunts. Yes, me and a collective of eight or nine friends decided to take on the Melbourne University Mathematics and Statistics Society's Annual Puzzle Hunt.

This was, needless to say, a very bad idea.

The puzzles are extremely difficult, each in a different way. Look at any of them and you'll understand why. You have no idea what you're looking for. There are no prompts, no questions. You have to notice that each wheel has 26 spokes and infer that each contains a letter of the English alphabet. Or that certain Chinese letters read out as English words when rotated counterclockwise. Last year, myself and a team of six managed to solved a total of zero puzzles.

Zero out of twenty.

Despite this, I convinced a bunch of people to participate and we (somewhat begrudgingly) assembled an odd team of some nine people. Given last year's brilliant score, I wasn't particularly optimistic.

We wrecked. We solved ten out of twenty puzzles, on par with the MIT team, and landed 54th place out of 219 teams.

I had known previously that a lot of those in my group were rather smart but it wasn't ever as apparent as during the puzzlehunt. One guy identified eight buildings based on their overhead street maps. Two others programmed bruteforce algorithms for a network. Another guy converted 2D animations of what he described as "pokemon MRI's" and created full 3D models out of them. Yet another recognized what sort of looked to me like a barcode to be an abstract rendition of Whistler's Mother. Same guy took what we had of a country code (an I and a P?) and correctly guessed "Swedish Meatballs" five minutes before the deadline.

All in all a really excellent experience, and I'd encourage anyone to participate.

Wednesday, April 17, 2013

Awful Jokes Bot has a home!

Finally set up @AwfulJokesBot to generate a joke every four hours.

Special thanks to orange for reminding me that it's a thing. Also thanks to Message Intercepted for an optimized rewrite and the staff of A Small Orange for some of the best tech support I've ever had.

Special thanks to orange for reminding me that it's a thing. Also thanks to Message Intercepted for an optimized rewrite and the staff of A Small Orange for some of the best tech support I've ever had.

Friday, April 12, 2013

Spambot Trap

The Earth is flat. Obama is actually part of the Taliban. The Holocaust never happened. Stalin is alive and disguised as a member of Congress. Area 51 is used as a means of contacting with an underground civilization of mole people that constantly break into people's homes and relocate remotes and car keys. We never should have abolished slavery, and we never visited the moon; all of the photos were faked.

(This is a spambot trap. I've noticed a recent increase in bot comments on my blog and want to see their opinions. Should be interesting).

(This is a spambot trap. I've noticed a recent increase in bot comments on my blog and want to see their opinions. Should be interesting).

Monday, March 18, 2013

GitHub Dump

I needed to upload a bunch of old projects for some new employment things onto GitHub and they're not really complete or anything but here they are anyways.

NeuralAPI

FacialRecognitionHKM

WebcamDraw

More of a personal post for my own organization, feel free to ignore.

NeuralAPI

FacialRecognitionHKM

WebcamDraw

More of a personal post for my own organization, feel free to ignore.

Pannenkoekenhuis (Windows Phone) : Published!

Pannenkoekenhuis : Windows Phone Store

Free for probably indefinitely. Comment / email me with suggestions and bugs.

Free for probably indefinitely. Comment / email me with suggestions and bugs.

Friday, March 8, 2013

Starbucks

I haven't written a post in a while and this isn't particularly interesting but I thought I'd share.

Coffee is amazing. This is old news for most of you, but I've just started drinking coffee regularly and it's the best thing ever. After class I'll bike to Starbucks for an hour (it's right next to my school) and start working on stuff. I do freelance programming now. It's nice because you set your own hours and pick your own jobs and get an immediate paycheck once you finish. I count my salary in how many macchiatos I can buy with it. Drink coffee to work, and work to drink coffee.

Such is the life.

This one time there was a job interview happening a table across from me, and the interviewee, a male in his early 30's it looked, was trying to sell himself for a teaching position (or so it seemed -- they never said what it was for). I had been in the coffeehouse for a few hours by then and had watched the other interviewers come and go. The other two interviewees before him seemed very average; formal. But this guy. He was hard-pressed to show exactly how interactive he was and how his teaching style would be super progressive and active and super full of energy and how this was his dream job and how he would do everything for the

"Hey how are you!"

wut

I looked back down at my book, pretending that he wasn't talking to me even though he was speaking directly in my direction. Long awkward pause -- and then I realized this was part of his "super friendly guy" shtick for the interview. "Oh, hi." I waved kind of awkwardly, and he returned the awkwardness with a sort of half-laugh, and I left the coffeeshop astounded at my ability to ruin the interview of complete strangers without even trying.

So that is Starbucks.

Coffee is amazing. This is old news for most of you, but I've just started drinking coffee regularly and it's the best thing ever. After class I'll bike to Starbucks for an hour (it's right next to my school) and start working on stuff. I do freelance programming now. It's nice because you set your own hours and pick your own jobs and get an immediate paycheck once you finish. I count my salary in how many macchiatos I can buy with it. Drink coffee to work, and work to drink coffee.

Such is the life.

This one time there was a job interview happening a table across from me, and the interviewee, a male in his early 30's it looked, was trying to sell himself for a teaching position (or so it seemed -- they never said what it was for). I had been in the coffeehouse for a few hours by then and had watched the other interviewers come and go. The other two interviewees before him seemed very average; formal. But this guy. He was hard-pressed to show exactly how interactive he was and how his teaching style would be super progressive and active and super full of energy and how this was his dream job and how he would do everything for the

"Hey how are you!"

wut

I looked back down at my book, pretending that he wasn't talking to me even though he was speaking directly in my direction. Long awkward pause -- and then I realized this was part of his "super friendly guy" shtick for the interview. "Oh, hi." I waved kind of awkwardly, and he returned the awkwardness with a sort of half-laugh, and I left the coffeeshop astounded at my ability to ruin the interview of complete strangers without even trying.

So that is Starbucks.

Friday, March 1, 2013

Pannenkoekenhuis (Windows Phone) : Closed Beta

None of you believed me when I said I'd actually get something on mobile. None. Zero. Well, guess what?

I showed up all of you.

Pannenkoekenhuis is now in closed beta on Windows Phone. If you own one, toss me your Microsoft Account email address and I'll send you a copy for review!

I'm expecting to release it to the general public in about a week. Until then, I suppose.

(Many thanks to Message Intercepted for the music!)

Thursday, February 7, 2013

raocow : "Diagnosis" Video Review

The awesome raocow made a review of Diagnosis today and it's really funny. He also reviewed Psycho Powers by a friend.

I'm really happy that there are people dedicated to the indie game community like this; raocow's an awesomely good-humoured guy and it's fun watching his reactions.

Go subscribe to him!

Tuesday, February 5, 2013

Tuesday, January 22, 2013

C++ : Facial Detection - Viola-Jones

So I completely abandoned what I had earlier because it wasn't great; here's another attempt using the well-known Viola-Jones paper.

The algorithm is fairly straightforward. Imagine you have a few hundred images of faces (24 px by 24 px) and a thousand times more images of non-faces (also 24 px by 24 px). The program first tries to "learn" a classifier based on Haar-like rectangular features. A "feature" is essentially all of the pixels in one area of the image added together, minus the pixels in another area added together. The "weak classifier" takes this value and classifies it as "face" or "not face" based on a threshold and a polarity. So, if your threshold is 50, and your polarity is "greater than," the weak classifier will classify an image as a face if the sum of one area minus the sum of another is "greater than 50."

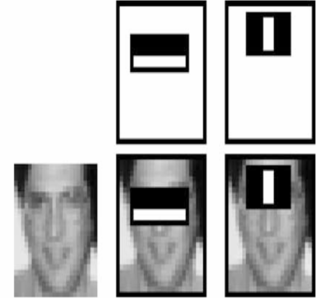

In the above image, you'd sum up the pixels in the white rectangle minus the pixels in the black rectangles for your feature value. The weak classifier returns a 1 or a 0, depending on whether this value is greater than or less than (depending on your polarity) the threshold for that classifier (1 represents "face," 0 represents "not face").

You can see in general how it works; the eyebrows are typically darker than the cheeks, and the bridge of the nose is typically brighter than the two sides of the face around it. The classifier is considered "weak" because it just so happens that no single classifier is very good at recognizing faces. The "strong classifier" comes from combining a lot of weak classifiers together based on their weighted errors (a somewhat complex process that you'll want to look in the paper for).

In order to find the weak classifiers, the algorithm uses AdaBoost, which essentially brute-forces all possible feature/polarity/threshold combinations for the one that mis-classifies the smallest number of images. This is sadly an O(AB) process, where A is the number of possible features (160,000+) and B is the number of face/non-face images (at least 20000). (Of course, this process is also repeated for the number of weak classifiers you are finding, so it might as well be O(ABC), where C is the number of classifiers). This is something like 3,200,000,000 operations, not counting K.

There are some optimizations one can make. Finding the sum of the pixels in any rectangle can be done in constant time (O(1)) if you use an integral image. That is, create an integral image where each pixel represents the sum of all the pixels to the top and left of itself.

The sum of the rectangle ABCD, above, is then just C - B - D + A. This is given by the fact that the rectangle at point C is all of the area from the top-left corner to C. Cutting off D and B (same principle) means we subtract the area represented by A twice; we compensate by adding A at the end. Hence, it becomes very easy to find the sum of any rectangle. (Constructing the integral image is another story -- you have to iterate through every pixel -- although since rectangles overlap there are some optimizations you can make there as well).

In a nutshell:

The algorithm is fairly straightforward. Imagine you have a few hundred images of faces (24 px by 24 px) and a thousand times more images of non-faces (also 24 px by 24 px). The program first tries to "learn" a classifier based on Haar-like rectangular features. A "feature" is essentially all of the pixels in one area of the image added together, minus the pixels in another area added together. The "weak classifier" takes this value and classifies it as "face" or "not face" based on a threshold and a polarity. So, if your threshold is 50, and your polarity is "greater than," the weak classifier will classify an image as a face if the sum of one area minus the sum of another is "greater than 50."

|

| Image by Viola-Jones, examples of Haar-like features |

You can see in general how it works; the eyebrows are typically darker than the cheeks, and the bridge of the nose is typically brighter than the two sides of the face around it. The classifier is considered "weak" because it just so happens that no single classifier is very good at recognizing faces. The "strong classifier" comes from combining a lot of weak classifiers together based on their weighted errors (a somewhat complex process that you'll want to look in the paper for).

In order to find the weak classifiers, the algorithm uses AdaBoost, which essentially brute-forces all possible feature/polarity/threshold combinations for the one that mis-classifies the smallest number of images. This is sadly an O(AB) process, where A is the number of possible features (160,000+) and B is the number of face/non-face images (at least 20000). (Of course, this process is also repeated for the number of weak classifiers you are finding, so it might as well be O(ABC), where C is the number of classifiers). This is something like 3,200,000,000 operations, not counting K.

There are some optimizations one can make. Finding the sum of the pixels in any rectangle can be done in constant time (O(1)) if you use an integral image. That is, create an integral image where each pixel represents the sum of all the pixels to the top and left of itself.

|

| Image by Chuan-Heng Hsiao and Amy Dai |

In a nutshell:

- Find a bunch of rectangular features that determine face-ly-ness of an image by brute-force en masse.

- Combine the features and their weighted errors to make a strong classifier.

Problems:

Horribly slow for the aformentioned reason (on the magnitude of days to weeks to train).

There's an implementation in OpenCV for it but I might GitHub my own implementation. Granted, I'm not willing to test it given the long computational time. It's probably all wrong.

Images and papers credit given where due (Viola and Jones for Viola-Jones (surprise!) and Freund, Schapire for AdaBoost).

Sunday, January 20, 2013

Verizon App Challenge

Me and a few friends are doing this Verizon Innovative App Challenge thing where we think of something communities need and pitch the idea for an app, or something. So I made this video. Enjoy, I think.

Tuesday, January 15, 2013

C++ : Facial Detection

Making a facial detection engine for a freelancing job. Very interesting stuff. I'll try to make this as readable and not-technical as possible, but keep in mind it's still fairly involved.

The following is based on the paper "Scale Invariant Face Recognition Method Using Spectral Features of Log-Polar Image" (which I'll refer to as the Hiroshima paper from now on, because of the URL).

Problem Statement:

Given a sample of huge yearbook photos (I mean 5184 px by 3456 px), detect the location of the face and then crop an area around it for the yearbook. Provided are also finished samples that can be used for learning.

Criteria:

Algorithm:

The Hiroshima paper describes an algorithm that's actually very straightforward.

The following is based on the paper "Scale Invariant Face Recognition Method Using Spectral Features of Log-Polar Image" (which I'll refer to as the Hiroshima paper from now on, because of the URL).

Problem Statement:

Given a sample of huge yearbook photos (I mean 5184 px by 3456 px), detect the location of the face and then crop an area around it for the yearbook. Provided are also finished samples that can be used for learning.

Criteria:

- The program should be reasonably fast, as there are very many pictures to process.

- Accuracy should be paramount even at the sacrifice of speed.

- Each photo has one face and one face only; therefore the program only needs to identify where the face is.

- The program should be able to rotate the image if necessary.

- Lighting conditions are generally standardized; the program is not responsible for editing apart from cropping and rotating.

Algorithm:

The Hiroshima paper describes an algorithm that's actually very straightforward.

- Convert each image into Log-Polar form for better representation.

- Take each pixel and find the corresponding polar coordinate at (exp(radius), angle). The radius should be the x-axis while the angle should be the y-axis. This ensures that any changes in scaling with the original image will only be represented along the x-axis, while any changes in rotation will go along the y-axis.

- Process each Log-Polar image for spectral features in the horizontal direction.

- In essence, you're looking at the autocorrelation of each row, processing each pixel as a cross-correlation with every other pixel. This helps to provide scale-invariance; since scale is represented only in the horizontal axis, the autocorrelation along that axis will not reflect changes in scale.

Note: This is a property of autocorrelations, but not something I really understand. - Construct a "mean face"; that is, many photos of faces aligned on top of each other in grayscale. Convert to Log-Polar and spectral features with the same process.

- Get the total covariance between the spectral features of the mean face and a sliding window of the same size of the image.

- Get the size of the mean face window.

- Move it around to every possible position inside of the original image. Crop this area and get the spectral features via the process above.

- Get the covariance of each pixel between the images, totalling them up. This step is something I came up with, with no real knowledge of statistics, and it's probably completely bogus. It is not outlined in the Hiroshima paper, which opted for Linear Discriminant Analysis instead. Makes sense, since the paper is interested more in detecting whether or not there's a face and less of where the face actually is.

- Lowest covariance means best match. If necessary, use a mean-shift algorithm to converge to the best point.

- Again, this is completely bogus and should be taken with a grain of salt. I don't know if one is supposed to add covariances like that.

Problems:

- Finding the spectral image of an image is an O(n^2) process via the naive way. It's very slow. Hence, running it for every possible window in Step 4 in an image is completely insane and the algorithm is very slow.

- Not actually sure if covariances help to determine better matches or not; seems to be a hit-and-miss with my results.

- Searching for the lowest covariance without looking at the surrounding neighborhood is very bad, especially if there's a huge false positive off in the corner of the picture or something. The mean-shift helps to compensate but not completely.

- Not rotation-invariant.

Optimizations:

- Instead of finding autocorrelations in Step 4 the naive way (which is O(n^2) ), one can use a property of Fourier transforms to speed it up to O(n log n). The optimization is based on the Wiener-Khinchin theorem, which states that any autocorrelation is simply the Fourier transform of the absolute square of the sample function. The wiki article explains it better.

- Scale down your images to something manageable before scaling up.

Results:

Haven't had a chance to test extensively, but it got one sample spot-on and it hit the eyebrows of another. My implementation of the Wiener-Khinchin theorem is off as I'm not getting the same results. Needs work.

I'd post pictures if I was allowed to (don't have permission from the client). Might end up dumping this one and using a different algorithm instead.

Sorry, I'm really geeking out today.

Tuesday, January 8, 2013

Python : Awful Jokes Bot

Made a Twitter app to generate and post awful jokes via Python.

#awfuljokesbot

Was a pretty interesting experiment, thought I'd share how it works:

The bot has a giant nouns.txt file stored locally, where it picks out two random nouns. The two nouns are translated into ARPABET using a lookup table (O(n) time; a binary search can cut it down to O(log n) but I'm lazy).

The first and last few phonemes are matched between the two words (the prefix of one and the suffix of the other), and if there's a match, combine the two words. E.g., 'napkin' and 'instrument' make 'napkininstrument.'

In order to remove the shared phonemes, the program estimates how many consonants to remove by counting consonants in the phonemes. It's very sketchy and a syllabic approach would be much better.

You end up getting 'napkinstrument' if all goes well.

Now, to generate the joke (yes, it goes backwards), the program looks up the Wikipedia articles of the two words and tries to find the most frequent nouns on the page (which will hopefully be keywords). Often times, the word will be the same (one of the most common words on the "napkin" wiki page happens to be "napkin").

In this case, the program generated "napkin" and "castanet."

Putting it together:

What do you get when you cross a castanet and a napkin? A napkinstrument!

The darker side:

The bot sucks. A lot. Sometimes, it just happens to work very well, but there's a bunch of issues with it.

#awfuljokesbot

Was a pretty interesting experiment, thought I'd share how it works:

The bot has a giant nouns.txt file stored locally, where it picks out two random nouns. The two nouns are translated into ARPABET using a lookup table (O(n) time; a binary search can cut it down to O(log n) but I'm lazy).

The first and last few phonemes are matched between the two words (the prefix of one and the suffix of the other), and if there's a match, combine the two words. E.g., 'napkin' and 'instrument' make 'napkininstrument.'

In order to remove the shared phonemes, the program estimates how many consonants to remove by counting consonants in the phonemes. It's very sketchy and a syllabic approach would be much better.

You end up getting 'napkinstrument' if all goes well.

Now, to generate the joke (yes, it goes backwards), the program looks up the Wikipedia articles of the two words and tries to find the most frequent nouns on the page (which will hopefully be keywords). Often times, the word will be the same (one of the most common words on the "napkin" wiki page happens to be "napkin").

In this case, the program generated "napkin" and "castanet."

Putting it together:

What do you get when you cross a castanet and a napkin? A napkinstrument!

The darker side:

The bot sucks. A lot. Sometimes, it just happens to work very well, but there's a bunch of issues with it.

- Skimming Wikipedia for keywords is not reliable. Often times you get words that aren't even remotely related. It might be interesting to, instead of using an arbitrary threshold like I do now (take the five most frequent nouns in the article and pick one randomly), take into account the frequencies of the nouns, and to use the more frequent ones more often.

- Word truncation based on consonants in phonemes is not a good idea, especially because of blends. This should be intuitive enough; everything about it smells of hack. A better way would be to count syllables, or somehow convert phonemes into words without relying on a dictionary.

- Certain words appear too often. The bot has a "may" fetish, for example, because "may" is both a noun and a (participle?), and so when it skims Wiki articles, it tends to choose that a lot.

That's all, folks. The bot currently uses someone else's code for the Wiki search; if I ever replace that I'll GitHub the entire thing and you can generate awful jokes right on your silly machines.

Subscribe to:

Posts (Atom)